There is one codec unrivaled in its monstrosity. I’m talking about GoToMeetingAndNeverReturn.

First of all, look at its size. The oldest version I could find (it decodes only G2M2 and G2M3) occupies 6196600 bytes. Newer one with G2M4 support (and that’s an additional compressor too) is 8247160 bytes, Current version (with seemingly the same functionality) is 15665528 bytes.

What does it contain? Everything. I kid you not, All (maybe except one) GoToUnpleasantPlace utilities refer this small file and actually export their real main() function out from it. And their size is about forty kilobytes each.

What does it contain from code point of view? Some libraries for networking, cryptography, speech codecs library, libjpeg, zlib, some internal code and tons of C++-induced crap. When you see wrapper calling wrapper calling wrapper calling something trivial (and the wrappers just pass arguments as is and do nothing else), or when you see that 90% of any function is used for exception handling, then we’re talking about enterprise-grade C++ indeed. And of course absolute bloatedness (including making indirect calls where unneccessary) and inability to run on Linux (not with Wine or MPlayer2 loader) greatly help debugging.

Now to the codec details: every frame consists of chunks which hold different kind of data — frame information, mouse cursor shape, mouse cursor position, image data, etc. Frame is divided into tiles (usually 8×8 tiles in frame) and each tile is coded separately.

There are two compression methods, known by their numbers stored in frame header. For G2M2 and G2M3 it’s compression method 2, for G2M4 it’s compression method 3. Both are horrible.

Method 2 seems to have some completely unrelated submethods.

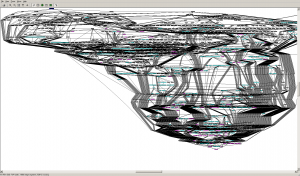

Here’s the call graph from method 2 decompression function.

Here’s the call graph from method 2 decompression function.

Looks like there are several possibilities when coding with this method.

There is a possibility to use JPEG compression but I don’t know under what circumstances it’s used.

Also I’ve discovered that MSA1(MSS3) and MTS2(MSS4) actually use standard recommended quantisation tables from ITU T.81 Appendix K.1 (they are not used by libavcodec JPEG decoder though) and quality to quantisation mapping from libjpeg6.

Another coding method employs ELS codes I’ve described in the previous post and uses it to code some bit plane and RGB triplet in dependent form (i.e. with prediction from two previous pixels and using R-G, G, B-G components).

For method 3 so far I know only a few facts. First byte contains image type and depending on it decoding may vary a bit. For example, for image type 0 and 3 three following bytes contain some RGB value, other image types don’t have it. And internally it’s called MPCCoder or SMPCCoder.

In conclusion I want to say that this codec is too large and horrible to use only static reverse-engineering (because it’s very hard even to determine what is the next function called indirectly) and debugging it requires Windows, so don’t expect me to RE it. But if you do you’ll get many thanks and some money from these guys. Good luck!