As I mentioned in the previous post, I’m polishing NihAV and improving some bits here and there. In this post I’d like to describe what has been done and what should be done (but will not necessarily be done).


NihAV: Boring Details

December 14th, 2019

The Unfinished Puzzle from Monty Python and the Quest for the Holy Grail

November 27th, 2019

In the previous post I mentioned that one of the levels contains an unfinished witch puzzle, probably based on Match Two, that also contains various photos of (presumably) the team and their families (about a dozen of them, actually). I also wanted to tell a bit more about it, and since I’m working on the boring parts of NihAV and that will take a while, why not tell the story now?


Railways of Baden and Württemberg

November 17th, 2019

First of all, unlike with the Rhineland-Palatinate railways, I’ve not travelled on all local railways here: because of certain geographic peculiarities it takes less time to get to the farthest corners of Rheinland-Pfalz from here than to places around Bodensee. And I can’t visit all historic railways either, because one of them is located in the middle of nowhere with the only connection being a bus that comes there maybe twice a day.

Nevertheless, I’ve seen most of them and here I’d like to describe my impressions of them.


NihAV: the Last Quack

October 31st, 2019

Finally NihAV got full-featured VP7 decoding support (well, except one very exotic case for a very exotic mode), so now I can move on to other things like actually making various decoders bit-exact, fixing other bugs in them, adding missing pieces of code for the player and even documenting stuff. I hope to give a presentation of my work at VDD 2020 or FOSDEM 2021 (whichever accepts it) and I want to have something decent to present by then.

Anyway, here’s a review of VP7.


Going to the 7th Level for the Grail!

September 28th, 2019

I’ve spent two days on something that I wanted to do a long time ago but postponed for various reasons, including complexity. But now, thanks to Ghidra, I’ve finally managed to pry into the resources of Monty Python and the Quest for the Holy Grail and decode the videos (and more!) stored there.

Probably it was VP7 (again) that made me look into it, but since Ghidra has a decompiler for 16-bit code as well, I tried it on the libraries of this surprisingly small game engine (pity that ScummVM does not even plan to support it, but I’m in a similar situation with codecs). And what do you know, they have a special library for dealing with resource files that includes unpacking images (but not audio; there is a separate audio library for that).

First, let’s talk about resource file organisation and why it baffled me for too long. The files are organised into several chunks: a header that contains the offsets of the other chunks at its end (around bytes 0xE2..0x141), then the actual table of contents (more about it later), then some small binary data chunk, then a list of strings related to file names, then some chunk that looks like a game script (in binary form), then the palette and finally another list of strings that looks like a list of variables. It is no wonder I could not do anything useful with it, since the actual table of contents is hidden somewhere near the end of the file but not exactly at the end. Also, each game scene corresponds to its own archive, which makes it clearer what to expect there (the previous version, used in Monty Python’s Complete Waste of Time, even has a description right in the header and the table of contents stored right after it; I might look at it later but no promises).

Now, files in the resource archive. The catalogue has only file type, its size and offset in the archive and nothing else. There are more than a dozen file types, 1 being images (both background and sprites), 2 being movies (the thing I was hunting), 4 is for MIDI tracks, 9 is for digitised audio, the rest I don’t care about.

Let’s move on to simple things like music and audio. Music is stored in a custom format with four bytes per command, except when the high bit is set (then it’s the delta time for the next command). Why so? Probably because it’s played by sending single MIDI commands, and at least on Windows that requires packing them into a 32-bit word anyway. Audio is stored in files with some header, either in raw form or with IMA ADPCM compression. The notable thing is that the ADPCM decoder does not use multiplications in any form: instead it has a precomputed table of all eight possible values for each step.
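To illustrate the precomputed-table idea, here is a minimal sketch built on the standard IMA ADPCM step and index tables. The layout of the game's own table, its file header and the nibble order are not described above, so `DIFF_TABLE` and `decode_nibble` are hypothetical names and the whole thing follows the generic IMA reference algorithm rather than the game's code.

```python
# Standard IMA ADPCM step sizes (89 entries) from the IMA reference algorithm.
STEP_TABLE = [
    7, 8, 9, 10, 11, 12, 13, 14, 16, 17,
    19, 21, 23, 25, 28, 31, 34, 37, 41, 45,
    50, 55, 60, 66, 73, 80, 88, 97, 107, 118,
    130, 143, 157, 173, 190, 209, 230, 253, 279, 307,
    337, 371, 408, 449, 494, 544, 598, 658, 724, 796,
    876, 963, 1060, 1166, 1282, 1411, 1552, 1707, 1878, 2066,
    2272, 2499, 2749, 3024, 3327, 3660, 4026, 4428, 4871, 5358,
    5894, 6484, 7132, 7845, 8630, 9493, 10442, 11487, 12635, 13899,
    15289, 16818, 18500, 20350, 22385, 24623, 27086, 29794, 32767,
]
# Standard step index adjustment for each 3-bit magnitude.
INDEX_ADJUST = [-1, -1, -1, -1, 2, 4, 6, 8]


def build_diff_table():
    """Precompute the delta for every (step, magnitude) pair with shifts and adds only."""
    table = []
    for step in STEP_TABLE:
        row = []
        for mag in range(8):
            diff = step >> 3
            if mag & 4:
                diff += step
            if mag & 2:
                diff += step >> 1
            if mag & 1:
                diff += step >> 2
            row.append(diff)
        table.append(row)
    return table


# Hypothetical name: eight precomputed deltas per step, as the text describes.
DIFF_TABLE = build_diff_table()


def decode_nibble(nibble, predictor, step_index):
    """Decode one 4-bit code; returns the new (sample, step_index) pair."""
    diff = DIFF_TABLE[step_index][nibble & 7]
    if nibble & 8:  # the top bit of the nibble is the sign
        predictor -= diff
    else:
        predictor += diff
    predictor = max(-32768, min(32767, predictor))
    step_index = max(0, min(88, step_index + INDEX_ADJUST[nibble & 7]))
    return predictor, step_index
```

With the table built once, decoding a sample costs one lookup, one add or subtract and two clamps.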

Images are another interesting topic. First of all, the palette is stored as a 944-byte file with 32-bit entries (so it’s just 236 colours), but somehow decoded files use it with a bias of ten, i.e. decoded index 13 corresponds to palette entry 3, index 27 to entry 17 and so on. I have no idea what it does with the first colours in the palette (though sometimes images are not colorised properly, so maybe they need a different palette bias or a different palette altogether).
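The bias can be sketched as a small lookup helper. The byte layout inside each 32-bit entry is not stated above, so treating the first three bytes as colour components and the fourth as padding is purely an assumption, and `parse_palette` and `lookup_colour` are hypothetical names.

```python
PALETTE_BIAS = 10  # decoded index 13 maps to palette entry 3


def parse_palette(data):
    """Split a palette file into 4-byte entries.

    Assumption: the first three bytes of each entry are the colour
    components and the fourth byte is unused.
    """
    if len(data) % 4 != 0:
        raise ValueError("palette size must be a multiple of 4")
    return [tuple(data[i:i + 3]) for i in range(0, len(data), 4)]


def lookup_colour(palette, index):
    """Map a decoded pixel index to a palette entry, or None if out of range."""
    entry = index - PALETTE_BIAS
    if 0 <= entry < len(palette):
        return palette[entry]
    return None  # indices 0..9 (and too-large ones) are not covered by the file
```

Returning `None` for the first ten indices just makes the unknown part explicit; what the game does with them is not known.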

Second, images employ two different compression schemes: RLE and LZW. RLE is rather trivial except that it uses opcode zero to signal end of line (and double zero for end of image).

An LZW-compressed image is split into chunks that may be uncompressed or compressed with LZW using 10, 11 or 12 bits per index. And after all these years I was still able to correctly guess it was LZW and write a decoder that worked fine without resorting to any documentation or even studying the decompiled code (I just needed to realize that it reads sequences of n-bit indexes and does something with them to output bytes).

And finally the movie format. Movies are actually stored in two files: the movie data and a companion frame index (i.e. frame type, size and offset). After extracting the frames I could not figure out what to do with them until I noticed that frames of type 2 all have a constant size of 11025, except for the first few. Obviously they turned out to be IMA ADPCM compressed audio frames (and since they were the first frames in every movie, it was hard to guess the format by looking at semi-random data without a header). Frame type 0 turned out to be the same image format as normal pictures.

Frame type 1 turned out to be inter-frames that use index 0 for pixels that should not be updated.
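Applying such an inter-frame to the previous picture can be sketched as below, assuming both frames have already been decoded into flat buffers of palette indices of the same size (`apply_inter_frame` is a hypothetical name).

```python
def apply_inter_frame(previous, update):
    """Return the new frame: index 0 in `update` keeps the old pixel."""
    if len(previous) != len(update):
        raise ValueError("frame sizes differ")
    return bytes(old if new == 0 else new for old, new in zip(previous, update))
```

For example, `apply_inter_frame(bytes([1, 2, 3, 4]), bytes([0, 9, 0, 7]))` keeps the first and third pixels and replaces the other two.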

Unfortunately even if I now have a way to decode the files I cannot add direct support for them in NihAV since it requires at least three different files to play it (palette, frame index and frame data). At least now I know I can transcode them into something when the need arises.

Now let’s talk about Ghidra a bit. Without it this probably would not have happened, since I’m not that young to spend time on translating tons of disassembly (and REC failed to do a good job). Ghidra’s support for 16-bit code is far from perfect, with all that mess of far pointers consisting of two 16-bit words, external DLL functions linked by ordinals (so I had to match them by hoof and rename where possible) and general confusion with int being treated as 4-byte in some contexts but 2-byte in others. And yet it did the job and helped me understand how the game libraries work, and that’s what really counts.

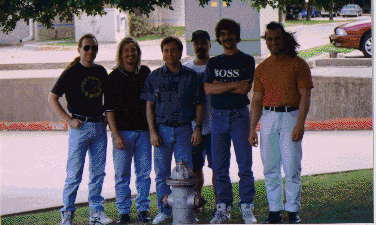

And as a bonus here’s a team photo extracted from concentration mini-game related to the witch scene (not the Simon Says clone with fires but probably Match Two clone that I’ve never seen in-game before):

MidiVid codec family

September 26th, 2019

VP7 is such a nice codec that I decided to distract myself a little with something else. And that something else turned out to be the MidiVid codec family. It turned out to be quite peculiar and somehow reminiscent of Duck codecs.

The family consists of three codecs:

- MidiVid: the original codec based on LZSS and vector quantisation;

- MidiVid Lossless: exactly what it says on the tin, based on LZSS and a bunch of other technologies;

- MidiVid 3: a codec based on a simplified integer DCT and a single codebook for all values.

I’ve actually added MidiVid decoder to NihAV because it’s simple (two hundred lines including boilerplate and tests) and way more fun than working on VP7 decoder. Now I’ll describe them and hopefully you’ll understand why it reminds me of Duck codecs despite not being similar in design.

MidiVid

This is a simple hold-and-modify video codec that was used in some games back in the PS2/Xbox era. The frame data can be stored either unpacked or packed with LZSS, and it contains the following kinds of data: a change mask for 8×8 blocks (in case of an interframe: if it’s zero then leave the block as is, otherwise decode new data for it), 4×4 block codebook data (up to 512 entries), high bits for 9-bit indices (if there are 257 to 512 distinct blocks) and 8-bit indexes for the codebook.

The interesting part is that the LZSS scheme looked very familiar, and indeed it looks almost exactly like lzss.c from the LZARI author (remember that? I still do); the only differences are that it does not use a pre-filled window and that flags are grouped into a 16-bit word instead of a single byte.
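A rough sketch of what such a decoder could look like is given below. It borrows the classic lzss.c parameters (4096-byte ring buffer, 12-bit position plus 4-bit length packed into two bytes, minimum match of 3, flag bit 1 meaning literal). Everything specific to MidiVid here is an assumption: the flag word being little-endian and consumed from the lowest bit, the window starting zero-filled instead of space-filled, and the initial write position. `lzss_decode` is a hypothetical name.

```python
N = 4096        # ring buffer size, as in lzss.c
F = 18          # maximum match length, as in lzss.c
THRESHOLD = 2   # matches encode lengths THRESHOLD+1 .. F


def lzss_decode(src, out_size):
    """lzss.c-style decoder with 16-bit flag words (see assumptions above)."""
    window = bytearray(N)   # assumption: zero-filled, not pre-filled with spaces
    r = N - F               # assumption: same start position as lzss.c
    out = bytearray()
    pos = 0
    flags = 0
    bits_left = 0
    while len(out) < out_size:
        if bits_left == 0:
            if pos + 2 > len(src):
                break
            # assumption: little-endian flag word, lowest bit used first
            flags = src[pos] | (src[pos + 1] << 8)
            pos += 2
            bits_left = 16
        is_literal = flags & 1
        flags >>= 1
        bits_left -= 1
        if is_literal:
            if pos >= len(src):
                break
            byte = src[pos]
            pos += 1
            out.append(byte)
            window[r] = byte
            r = (r + 1) & (N - 1)
        else:
            if pos + 2 > len(src):
                break
            lo, hi = src[pos], src[pos + 1]
            pos += 2
            offset = lo | ((hi & 0xF0) << 4)        # absolute ring position
            length = (hi & 0x0F) + THRESHOLD + 1
            for k in range(length):
                if len(out) >= out_size:
                    break
                byte = window[(offset + k) & (N - 1)]
                out.append(byte)
                window[r] = byte
                r = (r + 1) & (N - 1)
    return bytes(out)
```

The only structural change from a byte-flag decoder is the refill step, which reads two bytes and resets the counter to 16 instead of 8.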

MidiVid Lossless

This one is a special beast as it combines two completely different compression methods: the same LZSS as before and something used by a BWT-based compressor (to the point that the frame header contains FTWB or ZTWB IDs).

I’m positively convinced it was copied from some BWT-based compressor, not just because of those IDs but also because it seems to employ the same methods as some old BWT-based compressor except for the Burrows–Wheeler transform itself (that would be too much for the old codecs): various data preprocessing methods (signalled by flags in the frame header), move-to-front coding (in its classical 1-2 coding form that does not update the first two positions that much) plus coding coefficients in two groups: first just zero/one/large using an order-3 adaptive model and then values larger than one using a single order-1 adaptive model. What made it suspicious? Preprocessing methods.
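For readers who have not met move-to-front coding, here is a sketch of the plain textbook version. It does not model the variant mentioned above that updates the first two positions less aggressively, and `mtf_encode` and `mtf_decode` are hypothetical names.

```python
def mtf_encode(data):
    """Replace each byte with its current position in a recency list."""
    table = list(range(256))
    ranks = []
    for byte in data:
        rank = table.index(byte)
        ranks.append(rank)
        table.pop(rank)
        table.insert(0, byte)   # the symbol just seen moves to the front
    return ranks


def mtf_decode(ranks):
    """Invert mtf_encode by replaying the same list updates."""
    table = list(range(256))
    out = bytearray()
    for rank in ranks:
        byte = table.pop(rank)
        out.append(byte)
        table.insert(0, byte)
    return bytes(out)
```

Runs of equal bytes turn into runs of zeroes, which is what makes the output friendly to a simple adaptive model.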

MVLZ has different kinds of preprocessing methods: something that looks like distance coding, static n-gram replacement, table prediction (i.e. when data is treated as a series of n-bit numbers and the actual numbers are replaced with the differences from the previous ones) and x86 call preprocessing (i.e. that trick where you change function call addresses from relative to absolute for a better compression ratio and then undo it during decompression; also known as E8 preprocessing because the x86 call opcode is E8 <32-bit offset> and it’s easy to just replace those instead of adding a full disassembler to the archiver). I had my suspicions about n-gram replacement (it is quite stupid for video codecs, and it only replaces some values with binary sequences that look more related to machine code than to video), but the last item was a dead giveaway. I’m pretty sure that somebody who knows the open-source BWT compressors of the late 1990s will recognize it even from this description, but sadly I did not follow them that closely, being more attracted to multimedia.
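The E8 trick itself is easy to show in isolation. The sketch below is the generic form of the filter, not MVLZ's actual variant: it assumes the buffer starts at address 0, converts every E8 operand without any range checks (real filters usually add some), and uses the hypothetical names `e8_encode` and `e8_decode`.

```python
def _e8_filter(data, encode):
    buf = bytearray(data)
    i = 0
    while i + 5 <= len(buf):
        if buf[i] == 0xE8:
            value = int.from_bytes(buf[i + 1:i + 5], "little")
            next_insn = i + 5   # relative calls are measured from the next instruction
            if encode:
                value = (value + next_insn) & 0xFFFFFFFF   # relative -> absolute
            else:
                value = (value - next_insn) & 0xFFFFFFFF   # absolute -> relative
            buf[i + 1:i + 5] = value.to_bytes(4, "little")
            i += 5              # skip the operand so both passes scan identically
        else:
            i += 1
    return bytes(buf)


def e8_encode(data):
    """Turn relative call targets into absolute ones before compression."""
    return _e8_filter(data, True)


def e8_decode(data):
    """Undo e8_encode after decompression."""
    return _e8_filter(data, False)
```

Calls to the same function from different places end up with identical operand bytes after encoding, which is where the compression gain comes from.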

MidiVid 3

This codec is based on a static codebook for packing all values: block types, motion vectors and the actual coefficients. Each block in a macroblock can be coded with one of four modes: empty (fill with 0x80 in case of intra), DC only, DCT with just a few coefficients, and full DCT. As usual, various kinds of data are grouped and coded as single arrays.

Motion compensation is full-pixel and unlike its predecessor it operates in YUV420 format.

This was an interesting detour but I have to return to failing to start writing the VP7 decoder.

P.S. I’ll try to document them with more details in the wiki soon.

P.P.S. This should’ve been a post about railways instead but I guess it will have to wait.

AVC support in NihAV: semi-done

September 14th, 2019

I’ve wasted enough time on the AVC decoder for the On2 family, so while it’s not working properly for those special cases I’m moving on to VP7 regardless.

For those who don’t know (or forgot; or never had a reason to care), On2 AVC is an AAC-LC rip-off with some creative reconstruction modes added to the usual long/short windows. I failed to understand how it works before and I still fail to understand how it works. But at least some details are a bit clearer now that I’ve analysed the whole codec from scratch with less guesswork.

The codec has three IDs that it recognizes: 0x500, 0x501 and 0x1234. The first two differ only in that one handles single packets and the other handles several packets glued together, each prefixed with its size. The last ID is simply recognized but does not get any special handling.

The tricky part is some special modes that do some heavy processing of data. For most modes you invoke IMDCT and that’s all, here you do some QMF-like filtering (probably for transients extraction), then you perform RDFT (previously I thought it was plain FFT but after long investigation it turned out to be RDFT after all) on quarters, merge those quarters using filters that look like convolution filters for four sub-bands, perform RDFT again on the whole block and add some transients. And after that you still may need to reverse the data before using permuted window for overlap-add operation. In other words it’s not fun and I lack education for recognizing all those algorithms used, why they’re used and where it goes wrong.

So hopefully I’ll return to it some day to fix it for good but now VP7 awaits (so I can at least formally declare Duck codecs family done and move to implementing missing bits in the framework itself).

Dingo Pictures: A Book

August 24th, 2019

Whenever I think I’ve learned enough about Dingo Pictures, something new appears. From the Toys review I’ve learned that Roswitha Haas also published a book (or maybe several) directly related to the subject at hoof. And of course, being curious, I decided to buy it.

Amazon mentions two other books, an adaptation of Land Before Time and Jimmy Button, but I did not dare to buy them (one definitely has nothing to do with Dingo Pictures and I fear to find out that the first one is not about Tio).

Anyway, let’s look at the alternative version of King of the Animals Part 2 (spoiler: it’s still much better than Disney remake).

NihAV: still ducking

July 27th, 2019

While it’s summer and I’d rather travel around (or suffer from heat when I can’t), there has been some progress on NihAV. Now I can decode VP5 and VP6 files. Reconstruction still sucks because it takes a lot of effort to make perfect reconstruction and I’m too lazy to do that when a simple demonstration that the decoder works would suffice.

Anyway, now I can decode both VP5 and VP6 files including interlaced ones. Interlacing in VP5/6 is done in a very simple way, like in many other codecs: there’s a bit for each macroblock telling whether the macroblock should be output in interlaced form or not.

Of course this being the VPx family, they had to do it with some creativity. First you decode the base interlaced bit probability, which is stored as an 8-bit value while all other bit probabilities are stored in 7 bits. Then you derive the actual probability for the interlaced bit and decode it before any other macroblock information (including macroblock type, it’s that important). The probability is derived by companding the base probability depending on whether the last macroblock was interlaced (then the probability is halved) or not (then it’s remapped to fit the 128-255 range), except for the first macroblock in a row, which uses the base probability without modifications. And for VP6 you also have to use a different starting scan order (the band assignment for each coefficient, now it’s shuffled). This is so trivial that one would wonder why this has not been done in the libavcodec decoder yet.
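The derivation can be sketched in a few lines. The exact rounding of the halving and the exact formula for the remapping are not given above, so the shift and the linear `128 + base / 2` mapping below are assumptions chosen only to match the stated ranges; `interlaced_bit_prob` is a hypothetical name.

```python
def interlaced_bit_prob(base_prob, first_in_row, last_was_interlaced):
    """Derive the probability used to decode a macroblock's interlaced bit.

    base_prob is the 8-bit base probability (0..255) from the frame header.
    """
    if first_in_row:
        return base_prob            # used without modifications
    if last_was_interlaced:
        return base_prob >> 1       # halved (rounding is an assumption)
    return 128 + (base_prob >> 1)   # assumed remap of 0..255 onto 128..255
```

The result then feeds the bool decoder before anything else in the macroblock is read.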

There are three possible things to do next: polish current implementation, move to AVC (On2 AVC that is) or move to AVC (Duck VP7 which is AVC ripoff). But probably I’ll simply keep doing nothing instead.

Auf der Pälzische Eisenbahne…

June 16th, 2019

… gibt’s gar viele Haltstatione,

Kowelenz, Bingen, Bad Kreuznach, Lautre und Waldfischbach.

Ehm, sorry, wrong federal land for that song.

I think I can call my hobby project of travelling on all rail lines in Rheinland-Pfalz that are still in service complete.
