Since I still have nothing better to do I decided to look at the ALF2CD audio codec. And it turned out to be weird.

The codec is remarkable since while it seems to be simple transform+coefficient coding, it does that in its own unique way: the transform is some kind of integer FFT approximation and coefficient coding is done with a CABAC-like approach. Let’s review the details of the decoder as far as I understood them (so not much).

Framing. Audio is split into sub-frames for middle and side channels with 4096 samples per sub-frame. Sub-frame sizes are fixed for each bitrate: for 512kbps it’s 2972 bytes each, for 384kbps it’s 2230 bytes each, for 320kbps it’s 2230/1486 bytes, for 256kbps it’s 1858/1114 bytes. Each sub-frame has the following data coded in it: first and last 16 raw samples, DC value, transform coefficients.
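As a quick sanity check on those numbers, here is a small sketch that converts the sub-frame sizes back into bitrates. It assumes a 44.1 kHz sampling rate (not stated above, just a guess from the "CD" in the name), that one mid and one side sub-frame together cover the same 4096 samples, and that the pairs like 2230/1486 are mid/side sizes in that order.

```python
# Sub-frame sizes in bytes per nominal bitrate, taken from the text above.
# Reading "2230/1486" as (mid, side) is an assumption.
SUBFRAME_BYTES = {
    512_000: (2972, 2972),
    384_000: (2230, 2230),
    320_000: (2230, 1486),
    256_000: (1858, 1114),
}
SAMPLES_PER_SUBFRAME = 4096
SAMPLE_RATE = 44_100  # assumption: CD sampling rate, not stated in the text


def effective_bitrate(mid_bytes: int, side_bytes: int) -> float:
    """Bits per second implied by one mid plus one side sub-frame."""
    bits = (mid_bytes + side_bytes) * 8
    seconds = SAMPLES_PER_SUBFRAME / SAMPLE_RATE
    return bits / seconds


for nominal, (mid, side) in SUBFRAME_BYTES.items():
    print(nominal, round(effective_bitrate(mid, side)))
```

Under those assumptions every entry lands within a few hundred bits per second of its nominal bitrate, which suggests the sizes were simply derived from the target bitrate.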

Coding. All values except for transform coefficients are coded in this sequence: non-zero flag, sign, absolute value coded using Elias gamma code. Transform coefficients are coded in bit-slicing mode: you transmit the length of the region whose values may have bit 0x100000 set plus bit flags telling which entries in that region actually have it set, then the additional length of the region that may have 0x80000 set, and so on. The rationale is that larger coefficients come first, so only the first N coefficients may be that large, then the first N+M coefficients may have the next bit set, and so on down to bit 0. Plus this way you can have a coarse or fine approximation of the coefficients to fit the fixed frame size without special tricks to change the size.
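To make the bit-slicing idea more concrete, here is a minimal sketch of how I picture it. It only illustrates the scheme, it is not the actual bitstream layout: the function names are made up, signs are ignored, and the planes are kept as plain Python lists instead of being fed through the range coder.

```python
TOP_BIT = 20  # 0x100000 is the highest bit named in the text


def slice_planes(mags, top_bit=TOP_BIT):
    """Split magnitudes into (extra_length, flags) pairs, highest bit first."""
    planes = []
    region = 0
    for bit in range(top_bit, -1, -1):
        # The region must reach the last coefficient that has this bit set,
        # and it never shrinks from one plane to the next.
        last = max((i + 1 for i, m in enumerate(mags) if (m >> bit) & 1),
                   default=0)
        extra = max(last - region, 0)
        region += extra
        flags = [(m >> bit) & 1 for m in mags[:region]]
        planes.append((extra, flags))
    return planes


def merge_planes(planes, count, top_bit=TOP_BIT):
    """Rebuild magnitudes from however many planes were kept."""
    mags = [0] * count
    region = 0
    for depth, (extra, flags) in enumerate(planes):
        region += extra
        bit = top_bit - depth
        for i in range(region):
            mags[i] |= flags[i] << bit
    return mags


coeffs = [0x100001, 0x80000, 5, 0, 3, 1]
planes = slice_planes(coeffs)
exact = merge_planes(planes, len(coeffs))       # all 21 planes
coarse = merge_planes(planes[:2], len(coeffs))  # only the top two planes
```

Dropping trailing planes (as in `coarse`) is what gives the coarse approximation: the decoder just stops when the fixed-size sub-frame runs out of data.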

Speaking of the coder itself, it is a context-adaptive binary range coder but not exactly the CABAC you see in ITU H.26x codecs. It has some changes, especially in the model, which is actually a combination of several smaller models in the same space, and at the beginning of each sub-model you have to flip the MPS (most probable symbol) value and maybe transition to some other sub-model. I.e. a single model is a collection of fixed probabilities of one/zero appearing, and depending on what bit we decoded we move to another probability that suits it better (more zeroes to expect or more ones to expect). In H.26x there’s a single model for it; in ALF2CD there are several such models, so when you hit the edge state aka “expect all ones or all zeroes” you don’t simply remain in that state but may transition to another sub-model with different probabilities for expected ones and zeroes. A nice trick I’d say.
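Here is a toy sketch of that state machine idea. Everything concrete in it is invented for illustration: the two probability ladders, the jump tables `ON_EDGE` and `ON_FLIP`, and the class name have nothing to do with the real ALF2CD tables, they only show the shape of the mechanism.

```python
# Hypothetical tables: each sub-model is a ladder of P(LPS) values going from
# "unsure" at step 0 to "almost certainly the MPS" at the last step.
SUB_MODELS = [
    [0.45, 0.35, 0.25, 0.15],  # sub-model 0: mildly skewed
    [0.10, 0.06, 0.03, 0.01],  # sub-model 1: heavily skewed
]
ON_EDGE = [1, 1]  # sub-model to enter after an MPS at the last step
ON_FLIP = [0, 0]  # sub-model to enter after an LPS at step 0


class BitState:
    """One adaptive context: sub-model index, step inside it, current MPS."""

    def __init__(self):
        self.model = 0
        self.step = 0
        self.mps = 0

    def p_lps(self):
        return SUB_MODELS[self.model][self.step]

    def update(self, bit):
        last = len(SUB_MODELS[self.model]) - 1
        if bit == self.mps:
            if self.step < last:
                self.step += 1
            elif ON_EDGE[self.model] != self.model:
                # Edge state: move to another sub-model instead of staying.
                self.model = ON_EDGE[self.model]
                self.step = 0
        elif self.step > 0:
            self.step -= 1
        else:
            # Start of a sub-model: flip the MPS and maybe change sub-model.
            self.mps ^= 1
            self.model = ON_FLIP[self.model]


state = BitState()
for bit in [0, 0, 0, 0]:
    state.update(bit)  # the fourth zero crosses into sub-model 1
```

A plain H.26x-style model would correspond to a single ladder where the last step simply loops back to itself.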

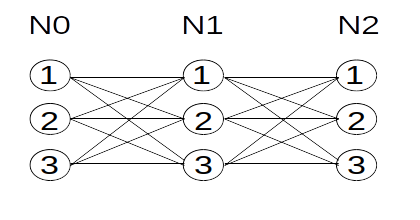

The coder also maintains around 30 bit states: state 0 is for coding non-zero flags, state 1 is for coding the value sign, states 2-25 are for coding the value exponent and state 26 is for coding the value mantissa (when we code lengths of transform coefficient regions it’s states 2-17 for the exponent and state 18 for the mantissa bits instead).
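Putting the value coding and the bit states together, decoding one signed value could look roughly like the sketch below. The `decode_bit(ctx)` callback stands in for the range decoder, and several details are my guesses rather than facts: one exponent state per position of the unary prefix, a zero bit meaning "one more mantissa bit", and a sign bit of 1 meaning negative.

```python
from typing import Callable, Iterable

CTX_NONZERO = 0
CTX_SIGN = 1
CTX_EXP_BASE = 2    # states 2-25 for ordinary values
CTX_MANTISSA = 26


def decode_value(decode_bit: Callable[[int], int],
                 exp_base: int = CTX_EXP_BASE,
                 exp_count: int = 24,
                 mantissa_ctx: int = CTX_MANTISSA) -> int:
    """Decode non-zero flag, sign, then an Elias-gamma-style magnitude."""
    if not decode_bit(CTX_NONZERO):
        return 0
    negative = bool(decode_bit(CTX_SIGN))  # assumption: 1 means negative
    # Exponent: unary count of mantissa bits, one state per position (guess).
    nbits = 0
    while nbits < exp_count and decode_bit(exp_base + nbits) == 0:
        nbits += 1
    # Mantissa: the bits below the implicit leading one, all in one state.
    mag = 1
    for _ in range(nbits):
        mag = (mag << 1) | decode_bit(mantissa_ctx)
    return -mag if negative else mag


def replay(bits: Iterable[int]) -> Callable[[int], int]:
    """Stand-in for the range decoder: returns scripted bits, ignores ctx."""
    it = iter(bits)
    return lambda ctx: next(it)


# flag=1, sign=1, prefix 0 0 1 (two mantissa bits), mantissa 0 1 -> -0b101
value = decode_value(replay([1, 1, 0, 0, 1, 0, 1]))
```

For region lengths the same routine would be called with `exp_count=16` and `mantissa_ctx=18` to match the alternative state layout.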

Reconstruction. This is done by performing the inverse integer transform (which looks like an FFT approximation but I’ve not looked at it that closely), replacing the first and last 16 samples with the previously decoded raw ones (probably to deal with effects of windowing or imperfect reconstruction), and finally undoing mid/side stereo for both sub-frames.
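The last two steps are simple enough to sketch. The inverse transform and the DC handling are left out since I can't describe them, and the mid/side formula here (left = mid + side, right = mid - side, no scaling) is the textbook one, assumed rather than verified against the codec; the function names are made up too.

```python
EDGE = 16  # number of raw samples stored for each end of a sub-frame


def patch_edges(samples, head, tail):
    """Overwrite the first and last EDGE samples with the raw stored ones."""
    out = list(samples)
    out[:EDGE] = head
    out[-EDGE:] = tail
    return out


def undo_mid_side(mid, side):
    """Textbook mid/side inverse; the real codec may scale differently."""
    left = [m + s for m, s in zip(mid, side)]
    right = [m - s for m, s in zip(mid, side)]
    return left, right


# Tiny usage example with a 40-sample "sub-frame" instead of 4096 samples.
transformed = list(range(40))
patched = patch_edges(transformed, [100] * EDGE, [200] * EDGE)
left, right = undo_mid_side([3, 4], [1, -1])
```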

Overall it’s an interesting codec since you don’t often see arithmetic coding employed in lossy audio codecs unless they’re very recent ones or BSAC. And even then I can’t remember any audio codec using a binary arithmetic coder instead of multi-symbol models. Who knows, maybe this approach will be used once again as something new. Most of those new ideas in various codecs have been implemented before after all (e.g. spatial prediction in H.264 is just a simplified version of spatial prediction in WMV2 X8-frames and quadtrees were used quite often in the 90s before reappearing in H.265; the same way Opus is not so modern if you know about ITU G.722.1 and heard that WMA Voice could have WMA Pro-coded frames in its stream).